(**) Translated with www.DeepL.com/Translator

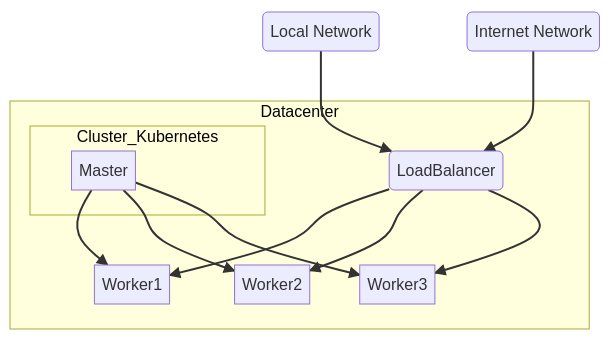

To expose the cluster services, I use an HAProxy server outside the cluster, acting as a reverse proxy. I won’t go into detail on its installation; the documentation you need is readily available online. Once installed, the reverse proxy becomes the entry point through which the applications are reached.

To find the service port in Kubernetes, connect to the master and run:

kubectl get service -n [namespace] [service name]

# ex : kubectl get service -n nextcloud nextcloud

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

nextcloud NodePort 10.107.203.125 <none> 80:31812/TCP 63d
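If you want to pick the node port out of that output in a script, here is a minimal sketch. The helper name `node_port_of` is hypothetical, and it assumes the PORT(S) value has the NodePort form `port:nodePort/protocol` shown above.

```shell
# Extract the node port from a PORT(S) value such as "80:31812/TCP".
# node_port_of is a hypothetical helper name, not part of kubectl.
# Assumes the NodePort form "port:nodePort/protocol".
node_port_of() {
  ports=${1#*:}                  # drop the service port and colon: "31812/TCP"
  printf '%s\n' "${ports%%/*}"   # drop the protocol suffix: "31812"
}

node_port_of "80:31812/TCP"
```

Against a live cluster, `kubectl get service -n nextcloud nextcloud -o jsonpath='{.spec.ports[0].nodePort}'` should return the same value without any text parsing.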

In this case, the service is exposed on port 31812 of the “worker” servers (see the diagram above). A Pod runs on one worker, and which one is not predictable unless you have forced it (with a nodeSelector), so your HAProxy rule has to list all the available workers in the cluster; HAProxy will do the rest.

Example HAProxy configuration

I have a “nextcloud” service with a single replica and two workers (192.168.1.12 and 192.168.1.13). One of the two workers runs the pod, but I don’t know which one:

[...]

frontend 192.168.1.10:443https

acl nextcloud.mondomaine.comssl hdr(host) -i nextcloud.mondomaine.com

use_backend nextcloud.mondomaine.comssl if nextcloud.mondomaine.comssl

backend nextcloud.mondomaine.comssl

mode http

enabled

balance leastconn

server worker1 192.168.1.12:[port exposed by the cluster] check maxconn 500

server worker2 192.168.1.13:[port exposed by the cluster] check maxconn 500

[...]

HAProxy health-checks each backend server (the “check” keyword) and sends the external traffic to a server that responds.
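With more than a couple of workers, the “server” lines of the backend can be generated rather than typed by hand. The following is only a sketch: `backend_servers` is a hypothetical helper, and the port and IP addresses are the example values used in this article.

```shell
# Print one HAProxy "server" line per worker for a given node port.
# backend_servers is a hypothetical helper; the first argument is the
# node port, the remaining arguments are the worker IP addresses.
backend_servers() {
  port=$1
  shift
  i=1
  for ip in "$@"; do
    printf 'server worker%d %s:%s check maxconn 500\n' "$i" "$ip" "$port"
    i=$((i + 1))
  done
}

backend_servers 31812 192.168.1.12 192.168.1.13
```

The printed lines can then be pasted into the backend section in place of the two hand-written “server” entries.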