(**) Translated with www.DeepL.com/Translator

Docker-style containers are now part of modern infrastructures, and more and more applications are delivered this way.

But running Docker in a datacenter very quickly becomes complicated: Is the container started? On which server? How many replicas are there? Are they properly load-balanced? And so on.

Kubernetes exists to automate the orchestration of all these containers. There are other orchestrators, such as Docker Swarm and Mesos, but Kubernetes has established itself with all the biggest cloud providers (AWS, Microsoft, Google…) and thus makes it possible to build hybrid clouds.

What is Docker?

Much too long to explain, but so quick to start… The Wikipedia link will lead you to long evenings of reading.

What is a container orchestrator for Docker?

A container orchestrator is an application that sits between the user and Docker. It provides the following:

(source: https://www.silicon.fr/avis-expert/orchestration-des-conteneurs-quels-cas-dusage-et-quelles-solutions, in French only)

- Provisioning and placement of containers: the orchestrator distributes and deploys containers to host machines according to their specified memory and CPU requirements.

- Monitoring: the orchestration tool can provide an overview of the metrics and health checks of both containers and host machines.

- Container failover management and scalability: if a host machine becomes unavailable, for example, the orchestrator restarts the container on another host machine. Scaling, depending on the orchestration solution used, can be manual or automatic (autoscaling).

- Management of container updates and rollbacks: with rolling updates, the orchestrator updates the containers one after another without causing application unavailability. While one container is being updated, the other available containers keep running. If necessary, the orchestrator can also roll back to the previous version.

- Service discovery and network traffic management: containers are volatile, so each container's network information (such as its IP address) changes. The orchestrator offers a level of abstraction that allows one or more containers to be grouped together, allocated a fixed IP address and exposed to other containers.
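To make the placement and failover points more concrete, here is a minimal Python sketch of what an orchestrator does in principle. It is a toy model, not how Kubernetes is implemented: the `Host` and `Container` classes, the `place` and `fail_over` functions, and the host names and resource figures are all hypothetical and chosen for illustration only.

```python
from dataclasses import dataclass, field


@dataclass
class Host:
    name: str
    cpu: float          # free CPU cores (illustrative value)
    mem: int            # free memory in MiB (illustrative value)
    up: bool = True
    containers: list = field(default_factory=list)


@dataclass
class Container:
    name: str
    cpu: float          # requested CPU cores
    mem: int            # requested memory in MiB


def place(container, hosts):
    """Put the container on the first healthy host with enough free CPU and memory."""
    for host in hosts:
        if host.up and host.cpu >= container.cpu and host.mem >= container.mem:
            host.cpu -= container.cpu
            host.mem -= container.mem
            host.containers.append(container)
            return host.name
    return None  # no host can take it: the container stays pending


def fail_over(failed, hosts):
    """Mark a host as down and re-place its containers on the remaining hosts."""
    failed.up = False
    orphans, failed.containers = failed.containers, []
    return {c.name: place(c, hosts) for c in orphans}


hosts = [Host("host1", cpu=2.0, mem=2048), Host("host2", cpu=4.0, mem=4096)]
web = Container("web", cpu=1.0, mem=512)
db = Container("db", cpu=2.0, mem=2048)

print(place(web, hosts))           # host1
print(place(db, hosts))            # host2 (host1 has only 1 CPU core left)
print(fail_over(hosts[0], hosts))  # {'web': 'host2'}
```

A real orchestrator uses far richer placement rules than this first-fit strategy, but the idea is the same: match declared requirements against free capacity, and redo the placement when a host disappears.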

And what’s Kubernetes?

Kubernetes is a project initiated by Google (2014), then released as open source and today maintained by the Cloud Native Computing Foundation (CNCF). It implements all the container orchestrator features described above. To summarize, Kubernetes is in charge of managing the lifecycle of containers in a datacenter. The project is moving very, very fast, so it is worth keeping a constant eye on this technology.

Proof Of Concept (POC) MytinyDC

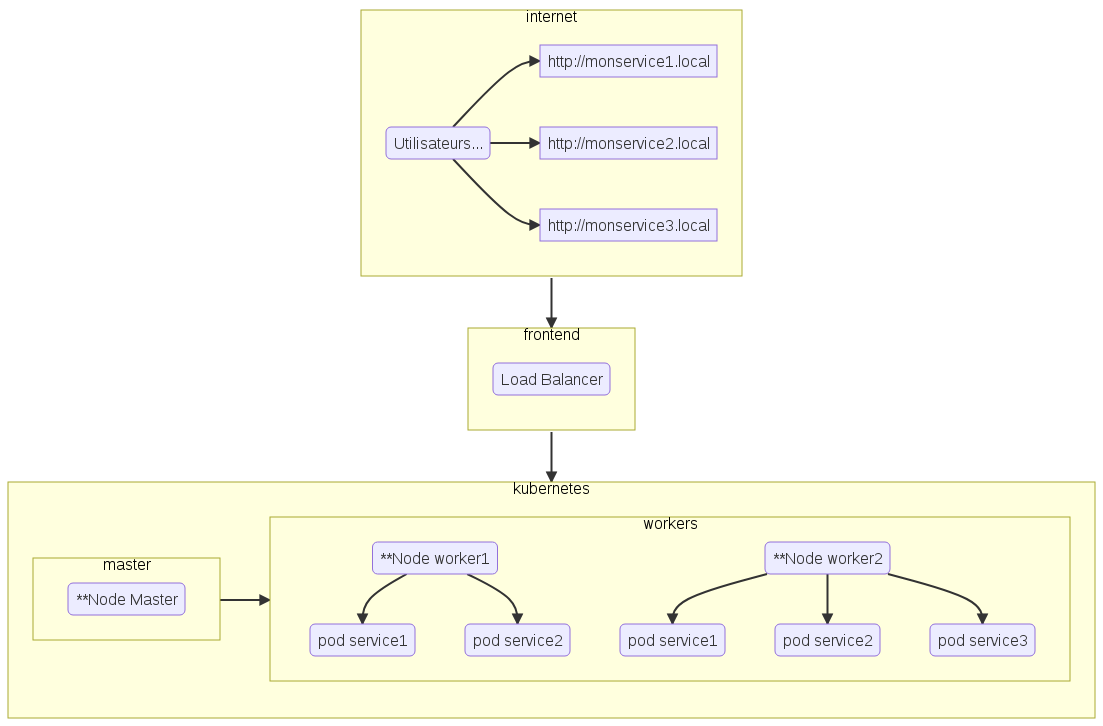

Kubernetes is complicated, but if you take your time, everything will work out in the end. In a simple configuration, a Kubernetes cluster consists of one “master” server and one or more “worker” servers.

- The “master” is in charge of supervising the life cycle of the containers.

- The “workers” are responsible for running the datacenter applications delivered in the form of containers.

The POC is relatively simple: install a Kubernetes cluster on arm and amd64 platforms, deploy a test application (such as nginx), and check the stability of the cluster. I will then start implementing MytinyDC on Kubernetes by reinstalling Nextcloud, which I will try to scale. This will be a rather complete case: PHP-FPM server, NFS file server, PostgreSQL database, Apache2 server…
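As an illustration of the "deploy a test application" step, here is a small Python sketch that builds a Kubernetes Deployment manifest for nginx and prints it as JSON. The Deployment name `nginx-test`, the replica count and the `nginx:stable` image tag are assumptions made for this example, not values taken from the POC.

```python
import json


def nginx_deployment(name="nginx-test", replicas=2, image="nginx:stable"):
    """Build a Deployment manifest as a plain dict (name, replicas, image are example values)."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            "replicas": replicas,
            # The selector must match the labels set on the pod template.
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {
                            "name": "nginx",
                            "image": image,
                            "ports": [{"containerPort": 80}],
                        }
                    ]
                },
            },
        },
    }


manifest = nginx_deployment()
print(json.dumps(manifest, indent=2))
```

The output can be saved to a file (for example `nginx-test.json`, a hypothetical name) and applied with `kubectl apply -f nginx-test.json`, since `kubectl` accepts JSON manifests as well as YAML.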

** Physical Server or Virtual Machine

In this context:

- monservice1 (associated with pod service 1) is load-balanced across worker1 and worker2

- monservice2 (associated with pod service 2) is load-balanced across worker1 and worker2

- monservice3 is not load-balanced: it is a single point of failure
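The effect of this layout can be sketched in a few lines of Python. This is a toy simulation only: the worker hosting monservice3 is an assumption (the text does not say which one it is), and the round-robin distribution is used purely for illustration, not as a description of how Kubernetes actually balances traffic.

```python
from itertools import cycle

# Which workers run a pod for each service.
# Assumption: monservice3 runs on worker1 only.
endpoints = {
    "monservice1": ["worker1", "worker2"],
    "monservice2": ["worker1", "worker2"],
    "monservice3": ["worker1"],
}


def available(service, down=()):
    """Workers that can still answer for the service when some workers are down."""
    return [w for w in endpoints[service] if w not in down]


def route(service, requests, down=()):
    """Round-robin the given number of requests over the available workers."""
    workers = available(service, down)
    if not workers:
        return []  # nothing left to serve the requests
    rr = cycle(workers)
    return [next(rr) for _ in range(requests)]


print(route("monservice1", 4))                    # ['worker1', 'worker2', 'worker1', 'worker2']
print(route("monservice1", 4, down={"worker1"}))  # ['worker2', 'worker2', 'worker2', 'worker2']
print(route("monservice3", 4, down={"worker1"}))  # []
```

When worker1 goes down, monservice1 keeps answering from worker2, while monservice3 has no endpoint left, which is exactly what makes it a single point of failure.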